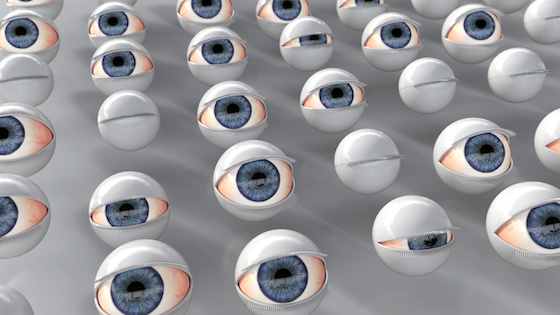

Hackaday reports that Italian roboticists are working on getting robots to blink naturally. Not blinking, they report, or blinking in non-human ways, can create the feeling of uncanniness that makes people uncomfortable with robots. Natural blinking behavior, on the other hand, makes people think the robots are more intelligent and more likable.

Disney seems to have mastered the trick.

But Hackaday readers had some deeper comments on the idea. “Simpler solution is to not put faces and eyes on robots,” said one commenter after a string of suggestions for fine-tuning robot blinking. “Nobody complains about their Roomba not blinking.”

Another commenter took it further. “It is unethical to try to make people ‘like’ your robot, or anthropomorphize it in any way. It contributes to, and normalizes, the technology of manipulation.”

And that potential for manipulation is part of the problem.

Likable robots

“Being perceived as intelligent can be advantageous for the effectiveness of a robot specifically in situations in which it has to transmit information in a social context, e.g. when the robot is used as a guide or informant in shopping malls, train stations or in tourist information centers,” said an earlier researcher. “A robot that is perceived [as] more intelligent is likely to be considered more competent and trustworthy in these cases.”

While we don’t usually get sentimental about our CNC machines or conveyor belts, social robots, collaborative robots, and robots in healthcare and educational settings are more useful when people like and trust them.

A number of researchers have been working on this. Changing the words the robots say, changing their physical appearance, and giving them more natural nonverbal communication are all strategies being tested.

But is it manipulative to design robots to elicit positive reactions from human beings? Roboticists creating and testing this type of robotic skill usually describe a situation such as a companion robot for an elderly person, or a healthcare robot that needs to persuade people to take actions like accepting medication.

But once those uses are commonplace, what is to stop people from programming robots to fleece marks in casinos or sway voters waiting in lines in polling places? AI could bring these examples about sooner than anticipated.

Ethical quandaries

Robots that appear to have emotions not only present the danger of manipulation by bad actors, but they could create ethical problems as well.

If a robot can simulate emotions, can we continue to treat it like a machine? Would treating it that way desensitize people and encourage cruelty to humans?

Researchers have found that realistic, human-like robots elicit fear and feelings of being threatened in some people. Is it acceptable to create machines that scare people?

For many of the researchers, the thrill is in figuring things out. They may not think about the ethical issues until it’s too late. Only time will tell.

Meanwhile, when you need assistance with your Rexroth industrial motion control, we are the ones to call.

- Call (479) 422-0390 for immediate assistance.